Posted by: Quentin Nivromont, 1 month and 1 week ago

➡️ This presentation is part of IRCAM Forum Workshops Paris / Enghien-les-Bains March 2026

1. The Stereo Paradox in an Immersive World

1.1. Stereo: A Spatial Encoding, Not Two Mono Channels

A stereo recording is not two independent audio signals. It is a correlated sound field encoded into two channels through deliberate inter-channel relationships — a principle established by Alan Blumlein in 1931 (UK Patent 394,325) and formalized through decades of psychoacoustic research (Blauert, Spatial Hearing, MIT Press, 1997; Rumsey, Spatial Audio, Focal Press, 2001).

At any given moment, the stereo signal encodes:

- Localizable sources — panned positions defined by Inter-channel Level Differences (ILD), perceived as phantom sources between the loudspeakers.

- Extended sources — spatial width conveyed through partial inter-channel correlation, perceived as sources with apparent source width (ASW).

- Diffuse content — ambience and reverberation encoded through low inter-channel coherence, contributing to listener envelopment (LEV).

These parameters are measurable: Inter-Channel Coherence (ICC), Inter-channel Intensity Difference (IID), and Inter-channel Phase Difference (IPD) are standardized in ISO/IEC 23003-1 (MPEG Surround). The stereo signal is not a simplification — it is a complete spatial encoding within the constraints of two channels.
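To make "measurable" concrete, here is a minimal sketch of two of these quantities computed on a single block of samples. It is a broadband simplification: MPEG Surround evaluates its parameters per time-frequency tile, whereas this example uses the whole band and a zero-lag correlation. The function name `interchannel_stats` and the test signal are illustrative assumptions, not part of any standard.

```python
import math

def interchannel_stats(left, right, eps=1e-12):
    """Return (level difference in dB, zero-lag normalized correlation) for one block."""
    e_l = sum(x * x for x in left)                    # left-channel energy
    e_r = sum(x * x for x in right)                   # right-channel energy
    cross = sum(l * r for l, r in zip(left, right))   # cross-channel energy
    level_diff_db = 10.0 * math.log10((e_l + eps) / (e_r + eps))
    coherence = cross / math.sqrt((e_l + eps) * (e_r + eps))
    return level_diff_db, coherence

# Illustrative signal: a 440 Hz tone panned left by amplitude only
# (same waveform in both channels, right channel at half amplitude).
fs = 48000
tone = [math.sin(2 * math.pi * 440 * n / fs) for n in range(4800)]
left = tone
right = [0.5 * x for x in tone]

ild_db, icc = interchannel_stats(left, right)
print(f"level difference: {ild_db:.2f} dB, correlation: {icc:.3f}")
```

An amplitude-panned source shows a non-zero level difference with a correlation close to 1; reverberant or ambient material would typically show a correlation much closer to 0.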

1.2. The Hardware Has Outpaced the Content

Modern playback systems have far more loudspeakers than the two that stereo was designed for, yet the content pipeline remains overwhelmingly stereo. An estimated 97–99% of the world's recorded music catalog exists in stereo or mono format. Apple Music launched Spatial Audio with Dolby Atmos in May 2021 with "thousands of songs" — a fraction of its ~100 million track library (Apple Newsroom, May 2021). Streaming music, podcasts, broadcast content, and legacy archives are stereo.

The result: multi-speaker systems play stereo through two speakers while the rest sit idle. The investment in immersive hardware produces no spatial benefit for the vast majority of content.

1.3. The Missing Link

The challenge is clear: given that content is stereo and systems are multi-speaker, how do we bridge the gap faithfully?

Three categories of existing solutions each fail in specific ways:

Simple signal distribution (phantom, L/R÷2) — routing L and R to additional speakers with attenuation. This is mathematically incorrect: energy summation is wrong, comb filtering occurs between correlated signals on multiple speakers, and the spatial field is not reconstructed but merely replicated.

Spatialization systems (IRCAM Spat, L-ISA, d&b Soundscape) — these treat each input as a mono object with positional metadata. When a stereo pair is sent as two objects, the spatialization engine has no knowledge of their inter-channel relationship. It distributes L and R independently, destroying the correlated sound field, the encoded panning positions, and the diffuse/direct ratio. The result depends on the panning algorithm (VBAP, DBAP, Ambisonics, WFS), but none accounts for stereo inter-channel correlation.

FFT-based upmixers: frequency-domain analysis offers sophisticated spectral separation but introduces inherent artifacts, adds latency, and is CPU-intensive, which makes it unsuitable for live applications and for entry-level audio products.

The missing link is an algorithm that understands stereo as a spatial encoding and reconstructs the encoded sound field across any number of loudspeakers — without spectral artifacts, without fabricating spatial content, and without ignoring inter-channel relationships.
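The comb filtering attributed to simple signal distribution can be illustrated with a small calculation. When the same signal reaches the listener from two loudspeakers with a relative delay τ, the sum x(t) + x(t − τ) has magnitude 2·|cos(π f τ)|, with notches at odd multiples of 1/(2τ). The sketch below assumes an idealized case (two equal-level copies, no room reflections); the function names and the 1 ms delay are illustrative choices.

```python
import math

def comb_gain_db(freq_hz, delay_s):
    """Level of x(t) + x(t - delay) relative to a single copy, in dB."""
    mag = abs(2.0 * math.cos(math.pi * freq_hz * delay_s))
    return 20.0 * math.log10(max(mag, 1e-9))  # floor avoids log10(0) at the notches

def first_notch_hz(delay_s):
    """Frequency of the first cancellation notch for a given relative delay."""
    return 1.0 / (2.0 * delay_s)

delay = 0.001  # 1 ms, roughly 34 cm of path-length difference in air
print(f"first notch: {first_notch_hz(delay):.0f} Hz")            # 500 Hz
print(f"gain at 1 kHz: {comb_gain_db(1000.0, delay):+.2f} dB")   # +6.02 dB
print(f"gain at 500 Hz: {comb_gain_db(500.0, delay):+.0f} dB")   # deep notch
```

The +6 dB peaks also show why the energy summation goes wrong: fully correlated copies add in amplitude, while uncorrelated signals add only in power (+3 dB).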

---

2. HSR: Design Philosophy and Architecture

2.1. Core Principle: Decode the Sound Field, Not the Channels

HSR (High Space Resolution) is built on a single premise: stereo is a spatial encoding that must be decoded before it can be rendered to multiple loudspeakers.

This is conceptually related to the primary-ambient decomposition framework established in the academic literature (Avendano & Jot, JAES, 2004; Goodwin & Jot, ICASSP, 2007; Faller & Breebaart, AES 131st Convention, 2011), but with a critical distinction: HSR operates entirely in the time domain, without FFT, windowing, or frequency-domain transforms.

The processing architecture has three stages:

2.2. Stage 1 — Inter-Channel Correlation Analysis

HSR continuously examines the relationship between L and R channels:

- Inter-channel level differences: identifying where energy is positioned across the stereo panorama.

- Inter-channel phase relationships: distinguishing coherent sources (high correlation) from diffuse content (low correlation).

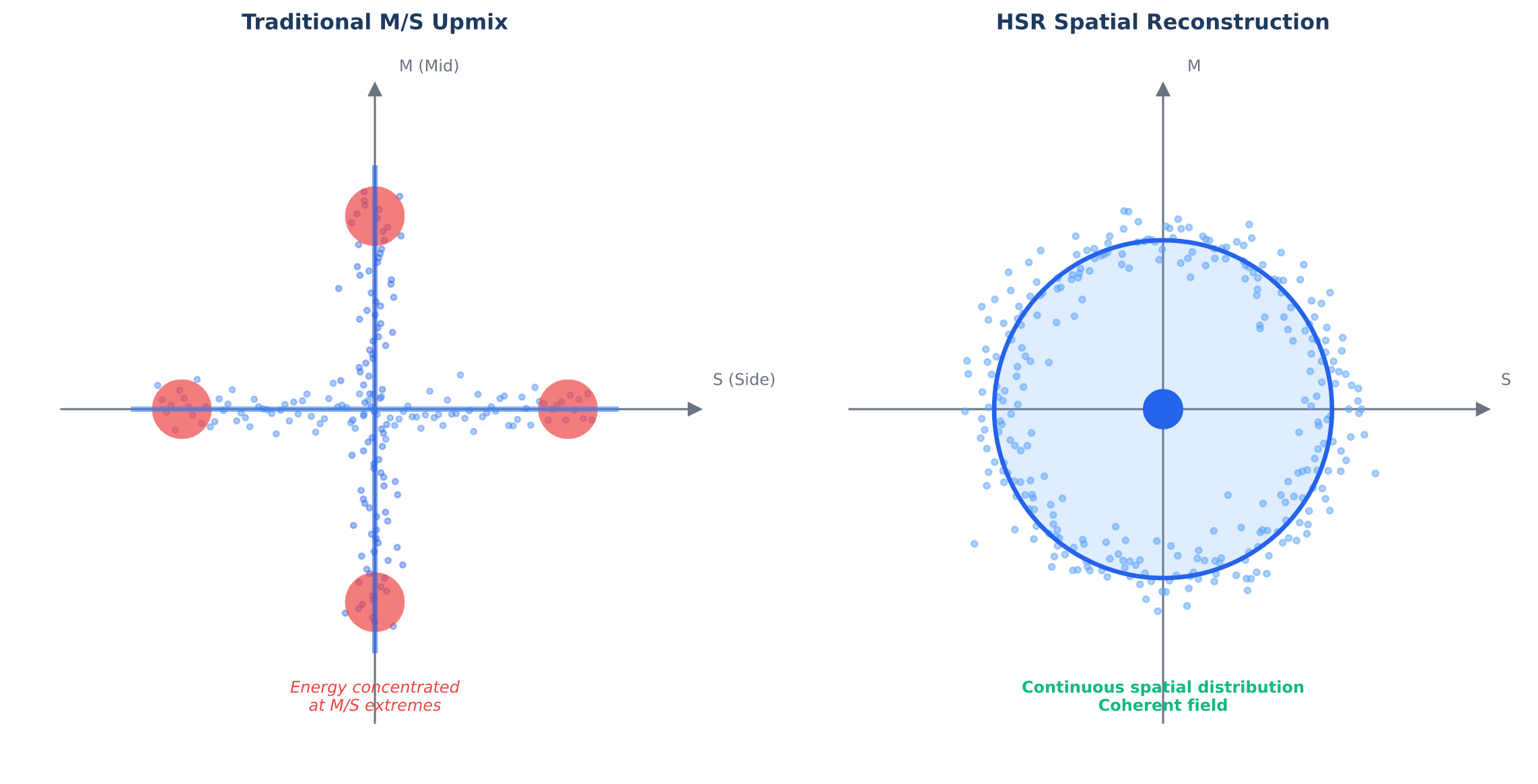

This analysis produces a continuous spatial map of the stereo field — not a discrete decomposition into "center" and "sides" (as in traditional Mid/Side processing), but a full distribution of energy across all panoramic positions.
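As a rough illustration of what a time-domain correlation analysis can look like, the sketch below tracks channel powers and cross-power sample by sample with one-pole smoothing, with no FFT or windowed frames involved. This is not HSR's actual algorithm, which the presentation does not disclose; the class name `RunningCorrelation` and the smoothing coefficient are assumptions made for the example.

```python
import math
import random

class RunningCorrelation:
    """Sample-by-sample estimate of the normalized L/R correlation."""

    def __init__(self, alpha=0.001):
        self.alpha = alpha  # smoothing coefficient, about 1000 samples of memory
        self.p_l = 0.0      # smoothed left power
        self.p_r = 0.0      # smoothed right power
        self.p_lr = 0.0     # smoothed cross-power

    def update(self, l, r):
        a = self.alpha
        self.p_l += a * (l * l - self.p_l)
        self.p_r += a * (r * r - self.p_r)
        self.p_lr += a * (l * r - self.p_lr)
        denom = math.sqrt(self.p_l * self.p_r)
        return self.p_lr / denom if denom > 1e-12 else 0.0

def final_correlation(left, right):
    """Run the estimator over two sequences and return the last estimate."""
    est = RunningCorrelation()
    c = 0.0
    for l, r in zip(left, right):
        c = est.update(l, r)
    return c

rng = random.Random(0)
noise_a = [rng.gauss(0.0, 1.0) for _ in range(20000)]
noise_b = [rng.gauss(0.0, 1.0) for _ in range(20000)]

coherent = final_correlation(noise_a, noise_a)  # identical channels
diffuse = final_correlation(noise_a, noise_b)   # independent channels
print(f"coherent: {coherent:.3f}, diffuse: {diffuse:.3f}")
```

Identical channels yield an estimate of 1, while independent noise yields a value near 0, which is the coherent/diffuse distinction described above.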

2.3. Stage 2 — Spatial Extraction

From the correlation analysis, HSR extracts spatial components as a continuous distribution. Each extracted component carries its position in the original stereo panorama, its energy level, and its coherence characteristics.

2.4. Stage 3 — Output Distribution

The extracted spatial field is mapped to the target loudspeaker array. Each speaker receives a signal derived from the spatial components corresponding to its angular position, with:

- Energy conservation: total acoustic power is maintained (iso-energy processing). Content does not get louder when spread across more speakers.

- Spatial coherence preservation: correlated components remain correlated on the target array; diffuse components remain diffuse. No artificial decorrelation is added.

- Timbral neutrality: no redundant correlated signals on adjacent speakers, avoiding the comb filtering that plagues simple distribution methods.

The distribution adapts to any speaker configuration — symmetric or asymmetric.
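The energy-conservation constraint can be sketched as a gain normalization: whatever weights a distribution stage assigns to the speakers, scaling them so that the squared gains sum to 1 keeps the total power constant. This is a generic power-normalization example under the assumption that speaker signals add in power at the listener; it is not taken from the HSR implementation, and `normalize_power` is a hypothetical helper.

```python
import math

def normalize_power(weights):
    """Scale per-speaker weights so the squared gains sum to 1."""
    total = math.sqrt(sum(w * w for w in weights))
    if total == 0.0:
        raise ValueError("at least one weight must be non-zero")
    return [w / total for w in weights]

# Illustrative weights for a component centered near the second of five speakers.
gains = normalize_power([0.2, 1.0, 0.6, 0.1, 0.0])
power = sum(g * g for g in gains)
print([round(g, 3) for g in gains], round(power, 6))

# Spreading equally over N speakers gives 1/sqrt(N) per speaker, not 1/N.
equal = normalize_power([1.0] * 4)
print(equal)  # four gains of 0.5
```

Dividing by N instead would make the content quieter as speakers are added, and leaving the weights unscaled would make it louder.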

3. HSR vs. Spatialization Systems: Complementary Tools

3.1. The Spatialization Paradigm

Modern spatialization engines — IRCAM Spat/SPAT Revolution (Carpentier, Noisternig & Warusfel, "Twenty Years of Ircam Spat: Looking Back, Looking Forward," 41st ICMC, 2015), L-Acoustics L-ISA, d&b Soundscape, Amadeus Holophonix — are designed for object-based audio. Each audio input is treated as a discrete point source with positional metadata, and the rendering engine calculates per-speaker amplitude coefficients using algorithms such as:

- VBAP (Vector Base Amplitude Panning — Pulkki, JAES, 1997): point-like virtual sources positioned via loudspeaker triplet gain calculation.

- DBAP (Distance-Based Amplitude Panning — Lossius, Baltazar & de la Hogue, SMC, 2009): no assumptions about speaker layout or listener position; useful for irregular arrays and installations.

- HOA (Higher Order Ambisonics — Gerzon, 1973; Daniel, 2000): scene-based encoding using spherical harmonics, decoded to any speaker configuration.

- WFS (Wave Field Synthesis — Berkhout, JAES, 1988): physical wavefront reconstruction using dense speaker arrays, eliminating the sweet spot.
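To show what "per-speaker amplitude coefficients" means in practice, here is a minimal sketch of the two-dimensional, loudspeaker-pair form of VBAP. The source direction is written as a weighted sum of the two loudspeaker unit vectors and the gains are then power-normalized. The function name `vbap_pair` and the ±30° layout are illustrative assumptions; the 3D case uses loudspeaker triplets and a 3×3 system instead.

```python
import math

def vbap_pair(source_deg, spk1_deg, spk2_deg):
    """Gains for a source between two loudspeakers (2D VBAP, power-normalized)."""
    s = math.radians(source_deg)
    a = math.radians(spk1_deg)
    b = math.radians(spk2_deg)
    # Solve g1*(cos a, sin a) + g2*(cos b, sin b) = (cos s, sin s) by Cramer's rule.
    det = math.cos(a) * math.sin(b) - math.sin(a) * math.cos(b)
    if abs(det) < 1e-9:
        raise ValueError("loudspeaker directions must not be collinear")
    g1 = (math.cos(s) * math.sin(b) - math.sin(s) * math.cos(b)) / det
    g2 = (math.cos(a) * math.sin(s) - math.sin(a) * math.cos(s)) / det
    norm = math.hypot(g1, g2)  # enforce g1^2 + g2^2 = 1
    return g1 / norm, g2 / norm

center = vbap_pair(0.0, -30.0, 30.0)     # equal gains, about 0.707 each
on_speaker = vbap_pair(30.0, -30.0, 30.0)  # all signal on the second speaker
print(center, on_speaker)
```

The calculation takes a single direction per input and nothing else, which is why an engine of this kind has no way to account for the relationship between two inputs that happen to be the L and R of a stereo pair.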

These systems are powerful — but they are designed for mono source objects. When stereo content is introduced as two mono objects (L and R), the spatialization engine:

1. Has no information about inter-channel correlation.

2. Distributes L and R independently, ignoring their encoded spatial relationship.

3. Applies panning algorithms designed for point sources to what is actually a correlated sound field.

4. Produces output that may exhibit comb filtering, altered width, modified panning positions, and loss of envelopment — depending on the algorithm used and the source/speaker geometry.

3.2. The L-ISA Stereo Mapper

L-Acoustics recognized this problem and introduced the Stereo Mapper feature in L-ISA 3.0. The Stereo Mapper "maps existing stereo content to an immersive speaker configuration without changing the original artist's mix," distributing stereo content "while conserving a similar power distribution as traditional left/right array configurations to retain the original stereo image and overall mix." (L-Acoustics, 2025)

This is a practical acknowledgment that stereo cannot simply be fed to a spatialization engine as two mono objects. It is, in essence, an upmixing solution within a spatialization framework.

3.3. The Combined Approach: HSR + Spatialization

The most powerful configuration uses HSR as a preprocessing stage before a spatialization engine:

Stereo (2 ch) → HSR → N spatial components → Spat / L-ISA / Soundscape → Speakers

In this workflow:

1. HSR decodes the stereo field into N spatially coherent components (each component represents a portion of the continuous panoramic distribution).

2. Each component is fed to the spatialization engine as an independent object — but unlike raw L/R, each object carries spatially meaningful content with coherent positioning.

3. The spatialization engine applies its rendering algorithm (VBAP, Ambisonics, WFS) to objects that are already spatially decomposed, not arbitrarily split stereo channels.

4. An additional algorithm, such as ICS (interference cancellation system), can remove the residual comb filtering that may still occur. Note that, compared with feeding a stereo signal directly to multiple speakers, a solution like HSR already reduces comb filtering.
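The hand-off between the two stages can be pictured as a list of objects, one per extracted component, each with a position for the spatialization engine. The sketch below is purely hypothetical: the presentation does not specify how component positions are chosen or transmitted, so the even spacing, the 60° stage width, and the names `component_azimuths` and `hsr_N` are assumptions for illustration only.

```python
def component_azimuths(n_components, stage_width_deg=60.0):
    """Evenly spaced azimuths in degrees, centered on 0, across the stage width."""
    if n_components < 1:
        raise ValueError("need at least one component")
    if n_components == 1:
        return [0.0]
    half = stage_width_deg / 2.0
    step = stage_width_deg / (n_components - 1)
    return [-half + i * step for i in range(n_components)]

# One object per component; a real engine would receive these via its own control API.
objects = [
    {"name": f"hsr_{i + 1}", "azimuth_deg": az}
    for i, az in enumerate(component_azimuths(5))
]
for obj in objects:
    print(obj)
```

In an actual deployment the sound designer would remain free to move or widen these objects, which is the flexibility the combined workflow is meant to preserve.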

The result: the fidelity of stereo-aware upmixing combined with the flexibility of object-based spatialization. The sound designer retains full control over spatial positioning while the stereo field's encoded spatial information is preserved rather than destroyed.

---

4. Application Domains

4.1. Live Sound

The live sound sector is where the stereo-to-immersive gap is most acute. Immersive systems — L-Acoustics L-ISA, d&b Soundscape, Amadeus Holophonix — are increasingly deployed in venues and touring productions. But the majority of playback content (backing tracks, DJ sets, pre-recorded sound effects, interval music) arrives as stereo.

HSR addresses this directly:

- Touring: the front-of-house engineer mixes in stereo (standard workflow, universal compatibility). HSR distributes the stereo mix across whatever speaker configuration exists at each venue (arena, theater, festival) with no per-venue preparation. By using multiple buses, the engineer can also adjust the spatial reproduction of individual stems within the master mix.

- Theatre: pre-recorded sound effects and playback tracks become spatial events that use the full installed system, without re-editing for multichannel.

- DJ performance: the DJ's stereo output feeds HSR, which expands it to fill main arrays, side fills, and ceiling speakers. The DJ works as always; the audience experiences spatial immersion.

The 5-sample latency is critical: live sound is latency-intolerant. Monitor systems, front-of-house alignment, and time-aligned delay towers all require sub-millisecond processing delays. HSR's 104 µs latency at 48 kHz is negligible in any live audio chain.
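The latency figure follows directly from the sample count and the sample rate. The short calculation below reproduces it and, for comparison, converts one analysis frame of a frequency-domain processor into time. The 1024-sample frame is an assumed, typical value chosen for illustration and is not a figure from this presentation.

```python
def latency_us(samples, sample_rate_hz):
    """Convert a delay expressed in samples to microseconds."""
    return samples / sample_rate_hz * 1e6

hsr_latency = latency_us(5, 48000)        # about 104.2 µs
fft_frame = latency_us(1024, 48000)       # about 21333 µs, i.e. 21.3 ms (assumed frame size)

print(f"5 samples at 48 kHz: {hsr_latency:.1f} µs")
print(f"1024-sample frame at 48 kHz: {fft_frame / 1000:.1f} ms")
```

For scale, sound travels about 3.6 cm in air during 104 µs, so the added delay is comparable to moving a loudspeaker by a few centimeters.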

4.2. Automotive

Modern premium automotive audio systems feature a large number of speakers distributed across doors, dashboard, A-pillars, headliner, rear deck, and subwoofer enclosures. A solution like HSR can manage all of these speakers and supports asymmetrical reproduction to account for the driver's off-center listening position.

4.3. Home Theater and Consumer Electronics

Soundbars (5–13 drivers), Atmos-enabled receivers (7.1.4, 9.1.6), and whole-home audio systems face the same content gap. HSR provides:

- Meaningful utilization of every driver in the system for stereo content.

- Center channel content derived from the stereo field (not a mono sum with comb filtering artifacts).

- Height speakers receiving spatially appropriate content (not disconnected ambience).

- Video synchronization guaranteed by sub-millisecond latency.

4.4. Broadcast

Broadcast facilities deal with constant format mismatch: legacy archives are stereo, live feeds arrive in stereo, and international content varies. HSR provides artifact-free format conversion:

- No pre-echo on speech transients (critical for dialogue intelligibility).

- No musical noise during quiet passages.

- No spectral smearing on complex material.

- Real-time, on-air operation with broadcast reliability.

5. Spacelite: HSR in Practice

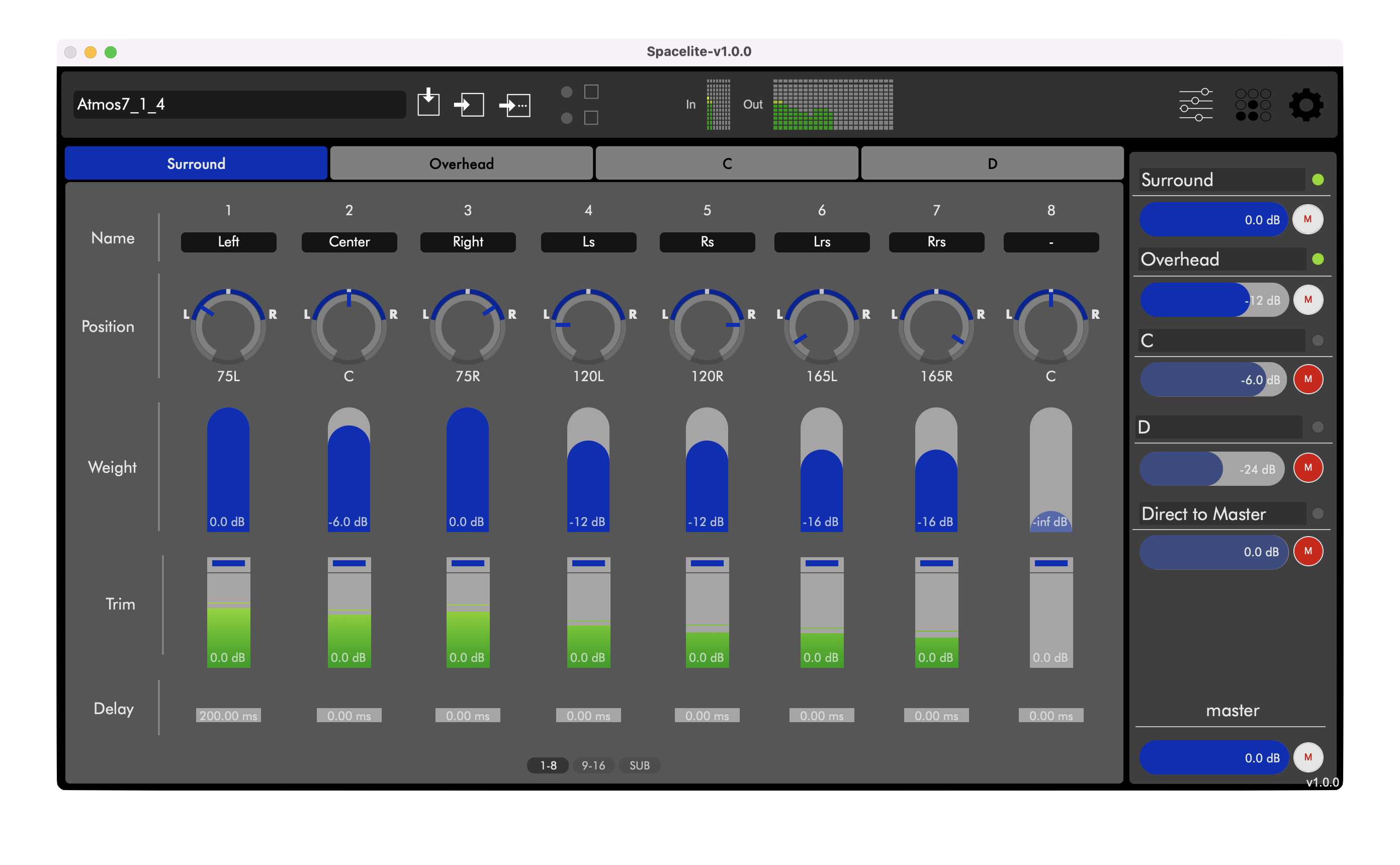

Spacelite is the software implementation of HSR, available as a standalone application for macOS and Windows.

- HSR upmix engine: stereo → any speaker configuration

- 4 stereo inputs: mix multiple stems simultaneously

- Full routing matrix: per-channel weight, pan, and gain

- Preset system: save and recall complete configurations

- MIDI/OSC control: external automation and integration

- HCC algorithm bass management: phase-aware subwoofer crossover

Spacelite is designed for immediate deployment: define your speaker positions, connect stereo sources, and the system produces spatial output in minutes. No spatial mixing expertise required; no content preparation necessary.

6. Conclusion: Upmixing as a Necessary Discipline

The audio industry is experiencing a fundamental asymmetry: playback systems have multiplied their speaker count while the content catalog remains overwhelmingly stereo. This gap cannot be solved by native immersive production alone — the economics and logistics of re-mixing decades of stereo content for multichannel are prohibitive, and new stereo content continues to be produced at vastly greater volume than native immersive content.

Upmixing is not a compromise. It is the technically correct solution to a real engineering problem: how to render a two-channel spatial encoding faithfully across a multi-speaker array. The quality of the solution depends entirely on the quality of the algorithm.

HSR addresses this problem from first principles:

- It treats stereo as what it is: a spatial encoding, not two mono signals.

- It operates in the time domain, eliminating the artifact classes inherent to frequency-domain processing.

- It preserves artistic intent by extracting and redistributing the spatial information that mix engineers encoded — not by fabricating new spatial content.

- It complements existing spatialization systems rather than competing with them — the combined HSR + Spat workflow demonstrates that upmixing and spatialization are not alternatives but complementary stages in the spatial audio chain.

The missing link in the immersive audio chain is not more speakers, more formats, or more metadata. It is a faithful stereo decoder. That is what HSR provides.

---

References

Standards

- ISO/IEC 23003-1 — MPEG Surround (Spatial Audio Coding), parametric stereo parameters (ICC, IID, IPD).

- ITU-R BS.775-4 (2022) — "Multichannel stereophonic sound system with and without accompanying picture."

- ITU-R BS.2051-3 (2022) — "Advanced sound system for programme production."

Patents

- Blumlein, A.D. — UK Patent 394,325, "Improvements in and relating to Sound-transmission, Sound-recording and Sound-reproducing Systems," filed 14 December 1931, accepted 14 June 1933.

Peer-Reviewed Publications

- Avendano, C. & Jot, J.-M. — "A Frequency-Domain Approach to Multichannel Upmix," *J. Audio Eng. Soc.*, vol. 52, no. 7/8, pp. 740–749, 2004.

- Berkhout, A.J. — "A holographic approach to acoustic control," *J. Audio Eng. Soc.*, December 1988.

- Berouti, M., Schwartz, R. & Makhoul, J. — "Enhancement of speech corrupted by acoustic noise," *Proc. ICASSP*, 1979.

- Blauert, J. — *Spatial Hearing: The Psychophysics of Human Sound Localization*, revised edition, MIT Press, 1997. [Open Access](https://direct.mit.edu/books/oa-monograph/4885/Spatial-Hearing)

- Carpentier, T., Noisternig, M. & Warusfel, O. — "Twenty Years of Ircam Spat: Looking Back, Looking Forward," 41st International Computer Music Conference, 2015. [ResearchGate](https://www.researchgate.net/publication/298982788)

- Faller, C. & Breebaart, J. — "Binaural Reproduction of Stereo Signals Using Upmixing and Diffuse Rendering," AES 131st Convention, 2011.

- Gerzon, M.A. — "Periphony: With-Height Sound Reproduction," *J. Audio Eng. Soc.*, vol. 21, no. 1, pp. 2–10, 1973.

- Goodwin, M. & Jot, J.-M. — "Primary-Ambient Signal Decomposition and Vector-Based Localization for Spatial Audio Coding and Enhancement," *Proc. ICASSP*, 2007.

- Goodwin, M. & Jot, J.-M. — "Spatial Audio Scene Coding," AES 125th Convention, 2008. [AES E-Library](https://www.aes.org/e-lib/browse.cfm?elib=14334)

- Lossius, T., Baltazar, P. & de la Hogue, T. — "DBAP — Distance-Based Amplitude Panning," *Proc. SMC*, 2009.

- Painter, T. & Spanias, A. — "Perceptual coding of digital audio," *Proc. IEEE*, vol. 88, no. 4, pp. 451–515, 2000.

- Pulkki, V. — "Virtual Sound Source Positioning Using Vector Base Amplitude Panning," *J. Audio Eng. Soc.*, vol. 45, no. 6, pp. 456–466, 1997. [AES E-Library](https://aes.org/publications/elibrary-page/?id=7853)

- Rumsey, F. — *Spatial Audio*, Focal Press / Routledge, 2001.

- Vickers, E. — "Fixing the Phantom Center: Diffusing Acoustical Crosstalk," AES 127th Convention, Paper 7916, 2009.

- Zotter, F. & Frank, M. — *Ambisonics: A Practical 3D Audio Theory*, Springer, 2019. [Springer](https://link.springer.com/book/10.1007/978-3-030-17207-7)

Spatialization Systems Documentation

- IRCAM / FLUX:: Immersive — [SPAT Revolution Documentation](https://doc.flux.audio/spat-revolution/Spatialisation_Technology_Panning_Algorithms.html)

- L-Acoustics — [L-ISA Immersive](https://www.l-acoustics.com/products/l-isa-immersive/)

- L-Acoustics — [L-ISA 3.0 Stereo Mapper](https://www.l-acoustics.com/press-releases/l-acoustics-launches-l-isa-3-0-the-most-powerful-and-accessible-immersive-audio-platform-for-live-audio-professionals-and-music-creators/)

- d&b audiotechnik — [Soundscape](https://www.dbaudio.com/global/en/solutions/soundscape/)

- Amadeus — [Holophonix](https://music-group.com/holophonix/)

Industry Sources

- Apple Newsroom — [Apple Music Announces Spatial Audio and Lossless Audio](https://www.apple.com/newsroom/2021/05/apple-music-announces-spatial-audio-and-lossless-audio/), May 2021.

- vrtonung.de — [Dolby Atmos Car Spatial Audio — Overview of Automotive Brands](https://www.vrtonung.de/en/dolby-atoms-for-cars-automotive-brands-spatial-audio-overview/)

- DAM Audio — [HSR Upmix Technology](https://www.dam-audio.com/research/hsr-upmix-technology)

- DAM Audio — [Spacelite](https://www.dam-audio.com/spacelite-standalone)
